Context.md - start building your AI memory

The real question is not what model you are using.

In journalism, context is not background. It is what makes the story. It determines what matters, what is noise, and what can be said with authority.

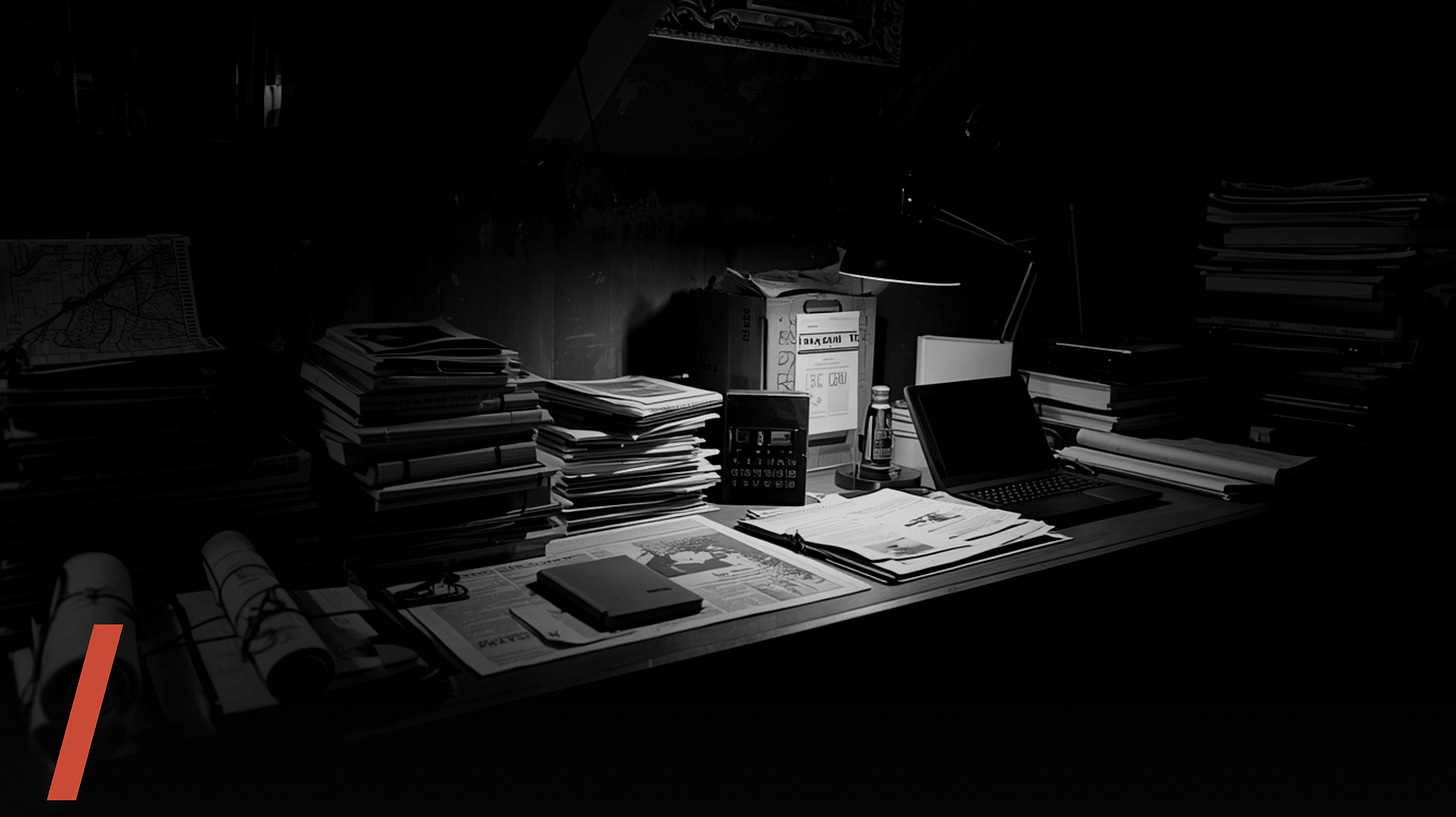

The reader’s context - the baseline you are writing into - is the minimum understanding you need before a single line gets filed. Send a reporter somewhere they do not understand, and you get a press release. Send them in carrying years of reading, sources, maps, transcripts, and prior coverage, and they return with something no one else can produce.

That same rule now governs how AI actually performs.

CHAT HAS NO MEMORY

Most newsrooms still treat AI like a day-one intern. Assign a task. Provide minimal briefing. Hope for a usable draft. That is chat. Chat has no memory. Every new window is a blank room. No history, no accumulation, no edge.

The constraint is not the model. It is context. Which is just another word for memory.

I CHANGED THE ARCHITECTURE

I spent a year working the wrong layer - following the industry’s shallow adoption curve. Polishing sentences. Drafting summaries. Translating copy. None of it compounded. Pro plans cap attachments. Gems hold a handful of files. Close the tab and the system forgets who you are.

So I changed the architecture.

I stopped paying for tools that did not accumulate knowledge. I stopped treating AI as a conversational interface. I started building a memory layer.

Over several months, I indexed a 3TB archive of journalism research: investigations, campaigns, case studies, newsgames, immersive formats. That archive now sits behind every task I run. Prompts stay short because the context is already in the room. The system does not guess. It retrieves.
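
As a rough illustration of "it retrieves", the sketch below ranks a few archive notes against a short prompt using bag-of-words cosine similarity, then prepends the best matches as context. Everything in it is an assumption made for illustration: the `ARCHIVE` entries, the function names, and the scoring method are hypothetical. A real 3TB index would more likely sit behind a proper search or embedding store than an in-memory dictionary.

```python
import math
import re
from collections import Counter

# Hypothetical stand-in for an indexed archive: document id -> text.
ARCHIVE = {
    "newsgame-notes": "newsgame mechanics scoring levels player choices budget simulation",
    "investigation-log": "investigation sources documents leaked records timeline verification",
    "immersive-formats": "immersive formats scrollytelling audio maps interactive video",
}

def tokenize(text: str) -> Counter:
    """Lowercase the text and count word occurrences."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query: str, archive: dict[str, str], k: int = 2) -> list[str]:
    """Return the ids of up to k archive entries that share words with the query."""
    q = tokenize(query)
    scored = [(cosine(q, tokenize(text)), doc_id) for doc_id, text in archive.items()]
    scored = [(score, doc_id) for score, doc_id in scored if score > 0]
    # Highest score first; ties broken alphabetically by id.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for _, doc_id in scored[:k]]

def build_prompt(task: str, archive: dict[str, str], k: int = 2) -> str:
    """Prepend retrieved archive text to a short task prompt."""
    context = "\n".join(f"[{d}] {archive[d]}" for d in retrieve(task, archive, k))
    return f"Context:\n{context}\n\nTask: {task}"

if __name__ == "__main__":
    print(build_prompt("Design a newsgame about budget choices", ARCHIVE))
```

The task line stays one sentence long; the retrieved notes carry the rest.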

STANDARDS ARE CATCHING UP

Google’s Stitch now reads DESIGN.md, an emerging open spec for encoding brand systems. I had already written mine. The moment Stitch could parse my file, it stopped being a demo and started generating whole interfaces in my brand, without me opening Figma.

From the opposite direction, Claude Cowork collapses the boundary between tool and workspace. Instead of exporting context into prompts, the agent operates inside your filesystem. It reads folder-level instructions, connects to Notion, Slack, and Drive, and works inside your context instead of asking you to paste it into a chat every time.
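
One way to picture folder-level instructions is a lookup that walks from a project root down to the working folder and collects an instruction file at each level, so deeper folders refine broader ones. This is a sketch under assumptions, not a description of how Cowork works internally: the filename `CONTEXT.md`, the function name, and the outermost-first ordering are all hypothetical.

```python
from pathlib import Path

def collect_instructions(root: Path, workdir: Path, filename: str = "CONTEXT.md") -> list[str]:
    """Gather instruction files from root down to workdir, outermost first.

    The filename and the ordering are illustrative assumptions.
    """
    root, workdir = root.resolve(), workdir.resolve()
    # relative_to raises ValueError if workdir is not inside root.
    parts = workdir.relative_to(root).parts
    folders = [root]
    for part in parts:
        folders.append(folders[-1] / part)
    found = []
    for folder in folders:
        candidate = folder / filename
        if candidate.is_file():
            found.append(candidate.read_text(encoding="utf-8").strip())
    return found

if __name__ == "__main__":
    import tempfile

    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        desk = root / "investigations" / "housing"
        desk.mkdir(parents=True)
        (root / "CONTEXT.md").write_text("House style: British spelling.", encoding="utf-8")
        (desk / "CONTEXT.md").write_text("Beat: housing policy.", encoding="utf-8")
        print(collect_instructions(root, desk))
```

Folders without an instruction file are simply skipped, so the context can be written once at the level where it applies.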

DESIGN.md pushes context out. Cowork pulls the agent in. Either direction, the principle is the same. The memory layer wins.

ONE PERSON, STUDIO OUTPUT

With that in place, one person can do the work of a studio. Projects that used to queue behind design and code cycles now ship the same day. This week I will publish a newsgame built in under 20 hours - on top of a memory of 150+ games I had already studied. The production was fast because the thinking was already done.

HOURS ON EXECUTION. MONTHS ON MEMORY. THE NEW UNIT ECONOMICS OF A SINGLE REPORTER.

THE MISSING LAYER

What is missing from most newsroom conversations is this layer. Industry reports still frame AI as transcription, summarisation, content placement. Useful. Not transformative. They document usage, not capability.

The sequence that works is structural. Foundation first. Memory and context. Skills and routines. Then the work on top. Not the other way around.

FROM ORNAMENT TO MOAT

Once the foundation is solid, you stop competing on work every model can now generate, and start doing what no model can. Work grounded in a specific, accumulated, opinionated context. Journalism with a body. Journalism with a room. Journalism that carries a signature the system can assist but not replicate.

That is where artistic journalism moves - from ornament to moat.

The real question is not what model you are using. It is what memory you are building - and how your stack lets it compound.